- Senior Data Engineer

Alta is looking for a Data Engineer to take ownership of various data tools from design to deployment!

on 2022-12-05

Full-time

Alta Group's members

Jean Campanero

Business (Finance, HR etc.)

What we do

Alta is one of Southeast Asia’s largest private investment technology platforms, building next-generation capital markets infrastructure to increase access and liquidity for entrepreneurs and investors.

At the core of our deal-making expertise lie our proprietary algorithms that syndicate opportunities in private companies and PE/VC funds to our network of strategic investors. This, coupled with the expertise of our investments team, has led us to uncover more than USD6 billion worth of deals for over 14,000 accredited investors from across the globe.

Why we do

By augmenting access and liquidity for entrepreneurs and investors, we accelerate the creation of innovative solutions that will go on to impact our communities and societies at large.

How we do

We are headquartered in Singapore with a growing presence in 4 countries across the Asia Pacific region.

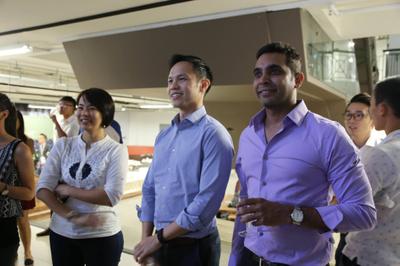

We believe it takes more than commitment to build the most accessible and efficient capital markets. Below are the four beliefs and attitudes that are our non-negotiables — components that make up the DNA of the Alta team:

- Forward

- Fortitude

- Family

- For our Future

As a new team member

We are looking for passionate Data Engineers with strong problem-solving skills and prior experience building data infrastructure. You should possess end-to-end data engineering knowledge (from dimensional modelling through ETL to data warehousing) and the ability to thrive in a fast-paced environment. As a Data Engineer, you will be involved in the agile development cycle and take ownership of various data tools from design to deployment.

Job Responsibilities:

• Working with Data Scientists, Data Engineers, Data Analysts, and Software Engineers to build and manage data products and the Fundnel Data Warehouse.

• Designing, developing, and launching extremely efficient and reliable data pipelines.

• Solving issues in the existing data pipelines and building their successors.

• Building modular pipelines to construct features and modelling tables.

• Maintaining data warehouse architecture and relational databases.

• Monitoring incidents by performing root cause analysis and implementing the appropriate action.

• Creating, documenting, and monitoring highly readable code.

• Obtaining and ingesting raw data at scale (including writing scripts, web scraping, calling APIs, writing SQL queries, etc.).

• Conducting and participating in code reviews with peers.

You’ll be an excellent fit for us if you:

• Hold a Master's/Bachelor’s degree in Computer Science or a related field, with a minimum of 2 years of IT experience.

• Have a minimum of 5 years' experience designing, building, and operationalising medium to large-scale data integration projects (structured and unstructured) with Data Lake, Data Warehouse, BLOB Storage, and RDBMS using AWS integration services.

• Have a minimum of 3 years of hands-on experience in batch/real-time data integration and processing.

• Are strongly proficient in handling databases using MySQL, PostgreSQL, and Redshift.

• Have a solid background in programming languages such as Python, Scala, or Java. Python is a must.

• Can build and maintain scalable ETL pipelines using Apache Airflow.

• Have prior experience using Big Data tooling (Hadoop, Spark) and a good understanding of functional programming.

Bonus attributes:

• Interested in working in a small business (startup) environment.

• Possess a strong interest in fintech / financial services.

• Interested in working with data, functions, and characteristics of companies.

• Challenge conventional thinking and push design to achieve functionality and beauty.

• Have a basic understanding of terms in financial statements and financial ratios.

This role is based in India!

If you possess all the above, click "I'm Interested" today. We want you!

What happens after you apply?

- Apply: click "Want to Visit"

- Wait for a reply

- Set a date

- Meet up
